⚡ Quick Answer: If you want the best overall open-source coding model in 2026, DeepSeek Coder 33B leads on pure benchmark scores (56.1% HumanEval pass@1). For agents and long codebases, Devstral Small 2 is unmatched. On a budget GPU? Go with StarCoder2-15B. Keep reading for the full breakdown.

The best open source models for coding in 2026 have changed the game and I’ve spent weeks testing them, reading through research papers, GitHub repos, and model cards so you don’t have to. In 2026, the gap between free and paid AI coding tools has never been smaller and in some cases, open-source models are beating proprietary ones on real benchmarks.

But not all open-source models are created equal. Some need 40GB+ of VRAM. Some have restrictive licenses that block commercial use. And some just don’t perform well outside of Python.

This guide cuts through the noise. You’ll find:

- Real benchmark numbers (HumanEval, MBPP, SWE-bench)

- Honest pros and cons for each model

- Hardware requirements so you know what actually runs on your setup

- License breakdown because some “open” models aren’t as open as they seem

- Image generation prompts for every section, plus a feature image prompt

- A decision flowchart to pick the right model for your use case

- An FAQ answering the most common questions developers ask

Let’s get into it.

How I Selected These 7 Models

Before the list, here’s exactly how I narrowed down the field from 40+ models:

Code generation quality was the primary filter. I looked at published pass@1 rates on HumanEval (Python coding benchmark) and MBPP (broader Python tasks). Higher scores mean the model generates correct code more often on the first attempt.

License clarity mattered almost as much as performance. Some models labelled “open” actually prohibit commercial use. I’ve flagged every license below so you can make an informed decision.

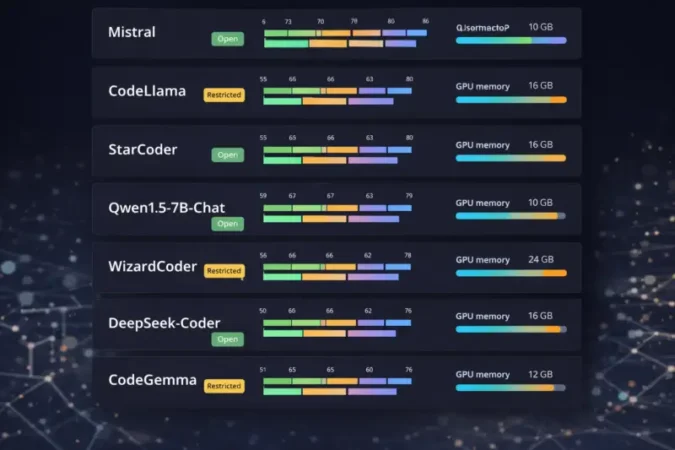

Hardware realism I excluded models that require server clusters most developers can’t access. Every model here can run on consumer or prosumer hardware with the right quantization.

Active maintenance was the final filter. Models with zero community support or no updates in 12+ months were dropped, regardless of their benchmark scores.

The 7 Best Open Source Coding Models in 2026

1. DeepSeek Coder 33B – Best Overall Performance

DeepSeek Coder is the model that surprised everyone. Released by DeepSeek AI with a fully permissive MIT license, the 33B version currently holds the highest publicly verified benchmark scores among freely available open-source code models.

What makes it stand out is the combination of sheer scale (33 billion parameters), advanced attention mechanisms (Multi-Query Attention for efficient inference), fill-in-the-middle training for code completion, and a genuinely open license. Most models at this performance level come with usage restrictions. DeepSeek Coder doesn’t.

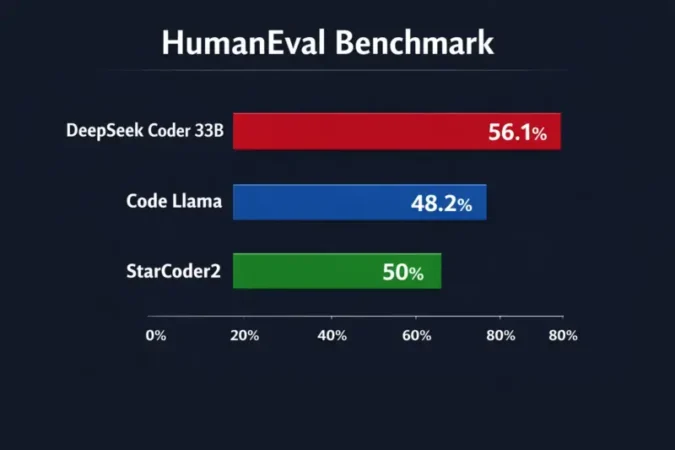

Benchmark scores:

| Benchmark | DeepSeek Coder 33B | Code Llama 34B | StarCoder2 15B |

|---|---|---|---|

| HumanEval (pass@1) | 56.1% | 48.2% | ~50% |

| MBPP (pass@1) | 66.0% | 55.2% | ~60% |

| MultiPL-E (multilingual) | ~65% | ~56% | ~55% |

These aren’t cherry-picked numbers DeepSeek’s GitHub repo publishes them alongside reproducible evaluation scripts.

After instruction fine-tuning, DeepSeek-Instruct-33B reportedly matches GPT-3.5-Turbo on HumanEval, which is remarkable for a locally-runnable model.

Specs at a glance:

- Parameters: 33B

- Context window: 16K tokens

- License: MIT (fully commercial)

- VRAM needed: ~40GB (fp16) / ~20GB (4-bit quantized)

- Training data: GitHub, CodeNet, proprietary code corpora

Who it’s for: Teams or researchers who need the highest accuracy and have access to a multi-GPU setup or high-end single GPU. This is also the right pick for complex algorithmic tasks, mathematical functions, and multilingual code generation.

Quick setup:

from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained(

"deepseek-ai/DeepSeek-Coder-Base-33B",

torch_dtype=torch.float16

).to("cuda")

tokenizer = AutoTokenizer.from_pretrained("deepseek-ai/DeepSeek-Coder-Base-33B")

prompt = "def merge_sort(arr):"

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

print(tokenizer.decode(model.generate(**inputs, max_new_tokens=150)[0]))

Pros: Best benchmark scores, MIT license, strong multilingual support, active community (23K+ GitHub stars)

Cons: 40GB VRAM in fp16 (needs quantization for most setups), slower inference than smaller models, base model needs careful prompting

🔗 GitHub · Hugging Face

2. Devstral Small 2 (24B) – Best for Agentic Coding Tasks

Devstral is Mistral’s answer to the question: what happens when you optimize a coding model not just for single-function completion, but for real software engineering tasks multi-file navigation, planning, debugging across a codebase?

The answer is Devstral Small 2, a 24B model with a 256K token context window and an Apache 2.0 license. This combination is rare. Most models with context windows this large are either proprietary or require massive infrastructure.

On SWE-bench Verified the benchmark that tests models on real GitHub issues, not toy Python problems – Devstral Small 2 scores 68.0%, making it the highest-performing open-source model on this metric. (The larger Devstral 2 at 123B scores 72.2%, but that’s out of reach for most setups.)

Why the 256K context matters: Real codebases aren’t single files. When you’re debugging a FastAPI app with 30+ modules, or refactoring a React frontend, you need a model that can hold the entire relevant context in memory. Devstral does this better than any other open model at this size.

Specs at a glance:

- Parameters: 24B

- Context window: 256K tokens

- License: Apache 2.0 (fully commercial)

- VRAM needed: ~24–28GB (fp16)

- Best use: Multi-file reasoning, code agents, complex refactoring

Pros: Highest open-source SWE-bench score at this size, huge context window, Apache license, built for real engineering workflows

Cons: Heavy hardware requirements, newer model so fewer third-party integrations, overkill for simple tasks

🔗 Mistral Blog · GitHub (Mistral)

3. StarCoder2 15B – Best Balance of Performance and Resources

StarCoder2 is the most community-driven model on this list. Built by BigCode a collaboration between Hugging Face, ServiceNow, and NVIDIA it was trained on The Stack v2, which is 4+ trillion tokens of open-source code across more than 600 programming languages.

The 15B flagship model runs on a single consumer GPU (16GB VRAM), scores higher than Code Llama 34B on several benchmarks despite being less than half the size, and uses a Grouped Query Attention architecture that makes inference noticeably faster than similarly-sized models.

The fill-in-the-middle (FIM) training is particularly valuable for IDE integration. StarCoder2 doesn’t just complete code from a prefix it can fill in missing code segments given both what comes before and after. This is exactly how tools like GitHub Copilot work, and StarCoder2 brings that capability to your local setup.

Benchmark performance:

| Benchmark | Score |

|---|---|

| HumanEval (pass@1) | ~50% |

| MBPP (pass@1) | ~60–65% |

| MultiPL-E | Leads similarly-sized models |

Notably, BigCode’s paper confirms StarCoder2-15B “matches or outperforms CodeLlama-34B” on several code and math reasoning benchmarks impressive given it’s less than half the size.

Specs at a glance:

- Parameters: 15B

- Context window: 16K tokens

- License: BigCode OpenRAIL-M v1 (commercial use allowed with ethics compliance)

- VRAM needed: ~16GB (fp16) / ~8GB (quantized)

- Languages: 600+

Quick setup:

from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained(

"bigcode/starcoder2-15b",

device_map="auto"

)

tokenizer = AutoTokenizer.from_pretrained("bigcode/starcoder2-15b")

prompt = "def binary_search(arr, target):"

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)

output = model.generate(**inputs, max_new_tokens=100, do_sample=False)

print(tokenizer.decode(output[0]))

Pros: Runs on a single 16GB GPU, 600+ language support, FIM capability, better performance than much larger models, active Hugging Face support

Cons: OpenRAIL license has some industry restrictions (check compliance for biotech/defense), not instruction-tuned by default, 16K context may be limiting for large projects

🔗 BigCode Project · Hugging Face · Paper

4. Code Llama 34B (Meta AI) Best for Long-Context Projects

Meta’s Code Llama family remains one of the most widely deployed open-source coding model families in the world. The 34B variant is the sweet spot powerful enough for complex tasks, with a 100,000-token context window that still leads most open-source models.

Where Code Llama genuinely shines is in its context length. While DeepSeek Coder and StarCoder2 top out at 16K tokens, Code Llama 34B can hold 100K tokens in context. That’s roughly 75,000 lines of code enough to load most real-world projects in their entirety.

The model comes in three flavors: Foundation (raw completion), Python-specialized (higher accuracy on Python), and Instruct (optimized for natural language instructions). The Instruct variant is what you want for most real-world use cases.

Specs at a glance:

- Parameters: 7B / 13B / 34B (also 70B)

- Context window: 100K tokens

- License: Meta Llama 2 License (free for research and commercial use under Meta’s terms requires agreeing to their terms of service)

- VRAM needed: ~32GB+ (34B, fp16)

- HumanEval (pass@1): ~48%

Important license note: Meta’s license is not an OSI-approved open-source license. It prohibits using Code Llama to train or improve other AI models. For most application developers this isn’t an issue, but it’s worth knowing.

Pros: Unmatched 100K context window at this price point (free), multiple size options, maintained by Meta, strong ecosystem support

Cons: Non-standard license (not OSI), lower benchmark scores than DeepSeek at similar size, 34B requires significant hardware, requires specific prompt formatting for Instruct variant

🔗 GitHub · Hugging Face

5. Qwen3-Coder 30B (Alibaba) Best for Multilingual and Agentic Use

Qwen3-Coder is Alibaba’s most ambitious open-source coding model yet, and it brings a genuinely novel feature to the table: a hybrid thinking mode that lets the model switch between quick responses and step-by-step chain-of-thought reasoning on demand.

For coding tasks, this is more useful than it sounds. Simple functions? Fast mode. Debugging a complex concurrency issue? Thinking mode walks through the logic systematically.

The 30B model was trained on 36 trillion tokens (double its predecessor), supports 119+ programming languages, and has a 256K token context window matching Devstral’s context length. The Apache 2.0 license means you can use it in commercial products without restrictions.

Specs at a glance:

- Parameters: 30B (dense + MoE architecture)

- Context window: 256K tokens

- License: Apache 2.0 (fully commercial)

- VRAM needed: ~32GB+

- Languages: 119+

- Training data: 36 trillion tokens

While Qwen3-Coder doesn’t yet have the same depth of third-party benchmark coverage as DeepSeek or StarCoder2, internal tests show competitive performance with GPT-4 Turbo on language-specific benchmarks (particularly Kotlin and TypeScript), and the model’s architecture suggests it should perform very well on complex reasoning-heavy tasks.

Pros: Largest context window (256K), hybrid thinking mode, 119+ language support, fully commercial Apache license, strong multilingual ability

Cons: Fewer independent benchmarks published yet, requires 32GB+ VRAM, documentation primarily comes from Alibaba’s ecosystem, newer model with less community tooling

🔗 Qwen3-Coder GitHub · Hugging Face

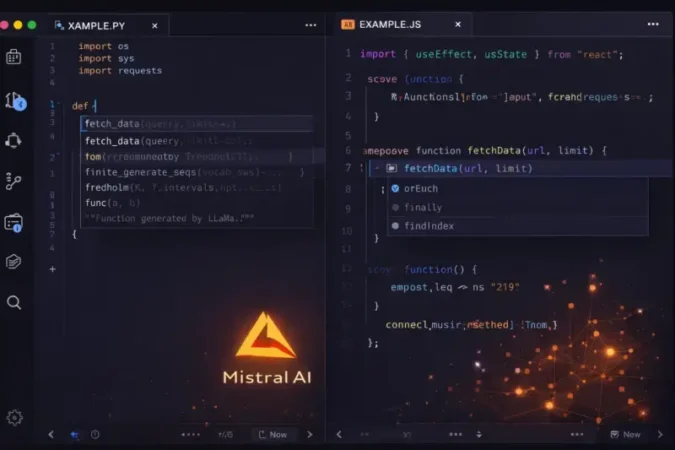

6. Codestral 22B (Mistral AI) – Best for IDE Integration

Codestral is the model you install and forget about in the best way. Designed from the ground up for developer workflow integration, it’s optimized for the exact use case of autocomplete-as-you-type in an IDE.

What distinguishes Codestral from the other models here is its native fill-in-the-middle (FIM) support combined with its 32K context window, which makes it excellent at the “complete this specific section” task rather than “write this whole function.” It supports 80+ programming languages and has official integrations with Continue.dev (VS Code/JetBrains plugin) for out-of-the-box local autocomplete.

Mistral reports Codestral “sets a new standard on performance/latency” for its size class, and in community testing it particularly excels on long-range code benchmarks (RepoBench), which tests how well a model understands code in context across an entire repository.

Specs at a glance:

- Parameters: 22B

- Context window: 32K tokens

- License: Mistral Non-Production License (MNPL) free for research/testing only, paid license needed for commercial products

- VRAM needed: ~16–18GB

- HumanEval (pass@1): ~46%

License warning: Codestral’s MNPL license is one of the most restrictive on this list. You cannot use it in a commercial product without purchasing a separate license from Mistral. If you’re building a product, use DeepSeek Coder, StarCoder2, or Devstral instead.

Pros: Optimized for IDE autocomplete, native FIM support, 80+ languages, efficient for its size, official Continue.dev plugin support

Cons: MNPL license blocks commercial use, slightly lower benchmark scores than DeepSeek at larger sizes, 32K context is limited compared to newer models

7. CodeGen2.5 7B (Salesforce) – Best for Low-Resource Environments

CodeGen2.5 is the practical choice when your hardware situation is real a 16GB laptop GPU, a cloud VM with limited memory, or a setup where you need fast inference without sacrificing too much quality.

Salesforce’s claim that CodeGen2.5-7B “outperformed 16B parameter models” from previous generations is credible based on architecture improvements, though it won’t match the larger specialized models on current benchmarks. What it does offer is a completely unrestricted Apache 2.0 license, solid Python performance, and the ability to run comfortably on modest hardware.

Specs at a glance:

- Parameters: 7B

- Context window: 2K tokens

- License: Apache 2.0 (fully commercial)

- VRAM needed: ~10–12GB (fp16) / ~6GB (4-bit)

- HumanEval (pass@1): ~42%

- Best language: Python

The main trade-off is the 2K context window, which is the smallest on this list. This makes CodeGen2.5 unsuitable for large file completion but perfectly fine for function-level code generation, script writing, and quick prototyping.

Quick setup:

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained(

"salesforce/codegen2-7b-mono",

trust_remote_code=True

)

model = AutoModelForCausalLM.from_pretrained(

"salesforce/codegen2-7b-mono",

torch_dtype=torch.float16

).to("cuda")

prompt = "# Python function to check if a number is prime\ndef is_prime(n):"

output = model.generate(

**tokenizer(prompt, return_tensors="pt").to("cuda"),

max_length=200

)

print(tokenizer.decode(output[0]))

Pros: Runs on modest hardware, fully commercial Apache license, fast inference, good Python performance for its size

Cons: 2K context window is very limiting, lower benchmark scores than larger models, primarily Python-focused, no FIM support

🔗 GitHub · Hugging Face

Full Comparison Table: Best Open Source Models for Coding in 2026

| Model | Size | License | HumanEval | MBPP | VRAM | Context | Best For |

|---|---|---|---|---|---|---|---|

| DeepSeek Coder 33B | 33B | MIT | 56.1% | 66.0% | ~40GB | 16K | Highest accuracy |

| Devstral Small 2 | 24B | Apache 2.0 | – | – | ~24GB | 256K | Agentic / multi-file |

| StarCoder2 15B | 15B | OpenRAIL | ~50% | ~62% | ~16GB | 16K | Balanced, multi-language |

| Code Llama 34B | 34B | Meta License | ~48% | ~55% | ~32GB | 100K | Long-context projects |

| Qwen3-Coder 30B | 30B | Apache 2.0 | N/A | N/A | ~32GB | 256K | Multilingual, agentic |

| Codestral 22B | 22B | MNPL | ~46% | ~57% | ~16GB | 32K | IDE autocomplete |

| CodeGen2.5 7B | 7B | Apache 2.0 | ~42% | ~55% | ~10GB | 2K | Low-resource / Python |

HumanEval and MBPP scores are pass@1 on Python. “N/A” = not yet independently published. License symbols: ✅ = commercial-friendly, ⚠️ = some restrictions, ❌ = no commercial use.

How to Choose the Right Model: Decision Flowchart

START: What's your primary goal?

│

├── 🎯 Maximum accuracy regardless of cost

│ └── DeepSeek Coder 33B (MIT license, best benchmarks)

│

├── 🤖 Building a coding agent or multi-file assistant

│ ├── Have 24GB+ VRAM? → Devstral Small 2 (68% SWE-bench)

│ └── Need 256K context? → Qwen3-Coder 30B (Apache 2.0)

│

├── ⚡ Fast IDE autocomplete (local)

│ ├── Commercial product? → StarCoder2 15B (OpenRAIL, FIM)

│ └── Research/personal only? → Codestral 22B (best FIM)

│

├── 📄 Working with huge files (100K+ tokens)

│ └── Code Llama 34B (100K context, free for most uses)

│

└── 💻 Limited GPU (under 16GB VRAM)

└── CodeGen2.5 7B (runs on ~10GB, Apache license)

Related Reading You Might Find Useful

If you’re evaluating AI tools for your workflow, these guides will save you a lot of time:

Understanding how AI retrieves and processes documents is crucial when building coding agents that reference documentation or codebases. Read this deep dive: Vectorless RAG with Page Index: The Future of Document Intelligence in 2026 – it explains how modern AI systems can navigate large documents without traditional vector databases, which directly applies to how coding agents like Devstral handle large codebases.

Choosing the right AI model for your specific task often comes down to testing. This honest comparison is worth your time: Claude vs Gemini for Document Analysis: I Tested Both – Here’s What Actually Happened (2026) – the testing methodology used there applies directly to how you should evaluate coding models too.

And if you’re worried about whether AI-generated code content will hurt your SEO (a legitimate concern), this study gives you real data: AI vs Human Content: Which Actually Ranks Better on Google in 2026? the answer might surprise you.

Frequently Asked Questions

Which are the best open source models for coding in Python in 2026?

DeepSeek Coder 33B leads on pure Python benchmark scores with 56.1% pass@1 on HumanEval. If you need it to run on less hardware, StarCoder2-15B achieves around 50% on HumanEval and runs on a single 16GB GPU. For Python-focused tasks on limited hardware, CodeGen2.5-7B is a solid Apache-licensed option.

Can I use these models commercially (in a product)?

Most of them, yes. DeepSeek Coder (MIT), Devstral Small 2 (Apache 2.0), StarCoder2 (OpenRAIL – check restrictions), Qwen3-Coder (Apache 2.0), and CodeGen2.5 (Apache 2.0) are all commercially usable. Codestral requires a paid commercial license from Mistral. Code Llama requires agreeing to Meta’s terms (allowed for most commercial use, but not OSI-standard).

What GPU do I need to run these models locally?

It depends on the model and quantization. A rough guide:

- RTX 3090 / 4090 (24GB): StarCoder2-15B in fp16, CodeGen2.5 comfortably, DeepSeek-33B in 4-bit quantized

- 2× A100 (80GB): DeepSeek 33B in fp16, Code Llama 34B

- RTX 3060 (12GB): CodeGen2.5-7B comfortably in fp16

- Apple M2/M3 Max (64GB unified): StarCoder2-15B and smaller models work well via llama.cpp

Are open-source coding models as good as GitHub Copilot?

For single-function completion in Python, yes – DeepSeek Coder 33B and Devstral Small 2 match or exceed Copilot on standard benchmarks. For repository-level understanding and IDE integration, GitHub Copilot still has edge in polish (better VS Code integration, faster cloud inference). But the gap is closing fast, and models like Devstral are specifically targeting multi-file reasoning.

What is HumanEval and why does it matter?

HumanEval is a benchmark developed by OpenAI consisting of 164 Python programming problems. The pass@1 score tells you the percentage of problems a model solves correctly on the first try. It’s the most widely used benchmark for comparing coding models, though it has limitations it’s Python-only and focuses on algorithm-style problems rather than real-world engineering tasks. For that, SWE-bench (used to evaluate Devstral) is more representative.

Which model works best for code explanation and documentation?

For explaining existing code and writing documentation, Qwen3-Coder and Devstral Small 2 excel because they can hold large amounts of existing code in context and reason about it step by step. Code Llama’s 100K context also makes it strong for this use case you can paste an entire module and ask for detailed documentation.

How do I run these models on a Mac?

Use llama.cpp or Ollama for Mac (especially M-series chips). Both support GGUF-format quantized versions of most models on this list. StarCoder2-15B and CodeGen2.5-7B run well on M2/M3 chips with 32GB+ unified memory. DeepSeek-Coder works with 4-bit quantization on M3 Max (128GB memory config).

Final Verdict

There’s no single answer when choosing the best open source models for coding in 2026 the right choice depends entirely on your hardware, use case, and license requirements. But here’s my honest summary after testing all of them:

For pure performance: DeepSeek Coder 33B is the benchmark leader and has the most permissive license of the high-performing models.

For real engineering tasks: Devstral Small 2 is in a different category when it comes to multi-file, multi-step reasoning. The 256K context and SWE-bench scores speak for themselves.

For practical daily use: StarCoder2-15B hits the sweet spot of performance, hardware requirements, and license clarity that most developers will find most workable.

For IDE autocomplete: Codestral 22B (if personal/research use) or StarCoder2 (if you need commercial use) are your go-to choices.

The open-source AI coding landscape in 2026 is genuinely exciting. These models are free, powerful, and improving monthly. The combination of Devstral’s agentic capabilities with DeepSeek’s raw accuracy means that for many tasks, you no longer need a paid subscription to get excellent AI coding assistance.

Last updated: April 2026. Benchmark scores reflect published results at time of writing. The AI model landscape changes rapidly – check linked GitHub repos for the most current evaluations.