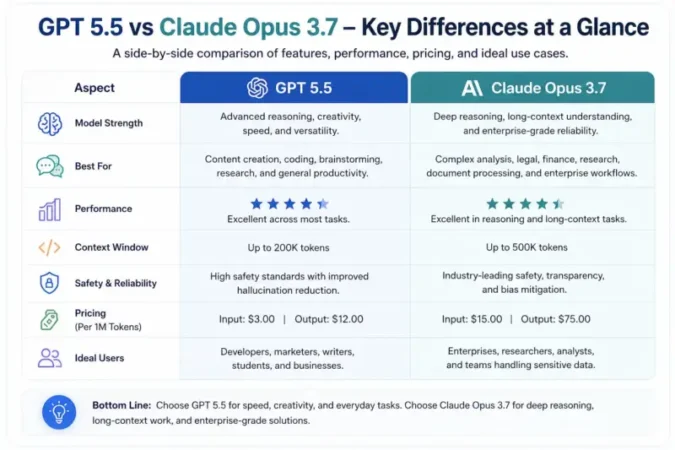

Executive Summary: GPT-5.5 (OpenAI’s latest LLM) and Claude 3.7 Sonnet (Anthropic’s advanced reasoning model, often called “Cloud Opus 3.7”) are state-of-the-art AI models. This article compares their key specs (architecture, context window, training data, parameters), performance (benchmarks like coding and reasoning), pricing tiers, and ideal use cases. We find that GPT-5.5 offers a much larger context window (1.05M vs 200K tokens) and top-tier benchmarks, while Claude 3.7 Sonnet is optimized for reasoning tasks, strong code generation, and comes at lower token costs. We include pricing tables, feature comparisons, pros/cons, FAQs, and a conclusion recommending the best scenarios for each model.

Introduction

AI developers and enterprises often ask: Which new large language model is better for my needs – OpenAI’s GPT-5.5 or Anthropic’s Claude 3.7 Sonnet (sometimes called Cloud Opus 3.7)? Both promise advanced reasoning and coding capabilities, but their details differ greatly. In this article, we dissect GPT-5.5 vs Cloud Opus 3.7 model side by side. We’ll break down their architecture, context length, multi-modal features, and pricing. You’ll learn which is faster, safer, and more cost-effective for tasks like coding, summarization, or data analysis. By the end, you’ll understand their strengths, limitations, and which one to choose for real-world AI projects.

Model Overviews: Architecture, Data, and Sizes

GPT-5.5 (OpenAI): OpenAI has not released a system card with exact architecture details, but GPT-5.5 is a large Transformer trained on diverse web text, code, and multi-modal data up to Dec 1, 2025. It supports text and image inputs, producing text outputs. OpenAI does not disclose parameter count; industry estimates put it in the hundreds of billions of parameters, comparable or larger than GPT-4. The model is proprietary (no open-source license) and only available via OpenAI’s cloud API or ChatGPT.

Image: Conceptual AI (illustrating neural network with circuit patterns). GPT-5.5’s key architecture fact is its massive context window: 1,050,000 tokens with up to 128K output tokens. It also introduces an optional parallel “Pro” mode with additional compute (GPT-5.5 Pro), further boosting reasoning (with higher cost). OpenAI emphasizes that GPT-5.5 is as fast per token as GPT-5.4, meaning improved intelligence without slower response.

Claude 3.7 Sonnet (Cloud Opus 3.7): Anthropic’s Claude 3.7 Sonnet is its hybrid-reasoning model (Sonnet is the medium size; “Opus” denotes the largest size, but Opus 3.7 was never released – Sonnet 3.7 is the flagship). Like GPT, Claude is a Transformer trained on a wide textual corpus with a focus on safe alignment via Anthropic’s “Constitutional AI”. It supports text and image input (text-only output). Claude models come in three sizes: Haiku (fast/small), Sonnet (balanced), Opus (largest). Sonnet 3.7 is the top of the Claude 3 family, optimized for reasoning and coding.

All Claude 3.7 Sonnet uses “extended thinking” mode (the first hybrid-reasoning model) where it can generate step-by-step chains of thought before answering. Parameter counts are also undisclosed (“unspecified”), but Sonnet is smaller than Claude Opus 4.x models. It is also proprietary and accessible only via Anthropic’s cloud or partners (e.g., Amazon Bedrock).

Context Window & Multimodality

GPT-5.5’s huge context window (1,050,000 tokens) means it can “remember” and process very long documents or codebases in one call. In practice, only a few use cases need that much but it enables long-form summarization and analysis. Claude 3.7 Sonnet’s context is 200,000 tokens (max 64K output). While far smaller, 200K is still among the largest contexts in the industry (10-50× larger than many older models).

Both models allow text and image inputs (multimodal). GPT-5.5 and Claude 3.7 can interpret images as prompts, but they do not generate images or audio/video themselves. Neither supports audio or video natively, unlike Google’s Gemini which has more modalities.

| Feature | GPT-5.5 (OpenAI) | Claude 3.7 Sonnet (Anthropic) |

|---|---|---|

| Context Window | 1,050,000 tokens | 200,000 tokens |

| Max Output Tokens | 128,000 tokens | 64,000 tokens |

| Modalities (Input/Output) | Text, Image → Text | Text, Image → Text |

| Token Limits (overflow) | Prices double above 272K in prompt | Standard (200K limit) |

| Fine-tuning Support | Not supported | Not offered (Anthropic does not allow user fine-tuning) |

| Parameter Count | Unspecified (likely 200B+ parameters) | Unspecified (perhaps tens of billions) |

| Training Data Cutoff | Dec 2025 (very recent) | Oct 2024 |

Latency & Throughput: GPT-5.5 is engineered to match GPT-5.4’s latency. Anecdotally, NVIDIA reports GPT-5.5 on their superchips yields >35× throughput vs older systems. Claude Sonnet is not the fastest Claude model (Claude Haiku is lower-latency for simple tasks), but Sonnet focuses on reasoning and can run “thinking” steps. Both models are delivered via the cloud, so end-user latency depends on server loads and request size.

Benchmarks & Performance

OpenAI’s charts and third-party tests show GPT-5.5 beating previous models and leading on many benchmarks. For example, on OpenAI’s internal Terminal-Bench 2.0, GPT-5.5 scores 82.7% vs Claude Opus 4.7 (Anthropic’s best) at 69.4%. TechCrunch notes GPT-5.5 “consistently scores higher” than Google’s Gemini 3.1 Pro and Claude Opus 4.5 on a range of tests. NVIDIA likewise reports GPT-5.5 yields faster coding iterations than older GPTs.

Claude 3.7 Sonnet also shines in benchmarks aimed at reasoning and code. Anthropic claims state-of-the-art on SWE-bench Verified (real-world code tasks) and TAU-bench (complex multi-step agentic tasks). It is especially tuned for “agency” (tool use, planning) and coding, assisted by its extended-thinking mode. In independent tests, Claude 3.7 Sonnet is praised for producing fewer logical errors and for a “deeper understanding” of codebases. The Databricks blog echoes that Sonnet delivers “best-in-class performance on complex tasks” and excels in multi-turn, long-context conversations.

Hallucinations & Safety: Both models incorporate safety layers. GPT-5.5 has “OpenAI’s strongest safeguards to date”. Anthropic’s Claude uses Constitutional AI principles, aiming to reduce harmful outputs by 45% in Sonnet 3.7. Both provide “assistant” style interfaces with filtering. In practice, neither is hallucination-free, but each team has published safety cards (OpenAI and Anthropic) showing their rates on tests. Users report Claude tends to refuse more prompts by default, but Sonnet reduces unnecessary refusals relative to older Claude. (Fine-tuning or prompt engineering are common ways to mitigate hallucinations, but note GPT-5.5 does not support user fine-tuning, and Anthropic currently does not offer customer fine-tuning either.)

API, Deployment, and Enterprise Features

- GPT-5.5 API (OpenAI): Accessible via OpenAI’s platform; supports ChatCompletion and Completions endpoints. It can be called through the OpenAI Python/Node SDK. Regions and enterprise contracts available; data privacy options exist (e.g., Microsoft’s Azure uses similar models with strict compliance).

- Claude 3.7 API (Anthropic): Available at Claude.ai and via partners (Amazon Bedrock, Databricks). It uses a Chat API interface. Anthropic also offers “Claude Code” (an agentic coding assistant in preview) that leverages Claude 3.7. Recently, Databricks integrated Claude 3.7 for enterprise data workflows.

- SDKs & Tools: OpenAI provides official client libraries and many third-party wrappers. Anthropic’s API is newer but has community libraries; AWS and Databricks integration mean Claude can be used via common data pipelines.

- On-Premise Deployment: Neither model is offered on-premise (both are proprietary cloud services). Anthropic has in negotiations with some governments for on-prem contracts, but publicly it’s cloud only. OpenAI also only runs it in its own data centers (or Microsoft Azure for some partners).

- Licensing: Both are closed-source with usage terms (no rights to the weights). They may restrict certain industries under acceptable-use policies (e.g. legal, healthcare use might need review).

- Enterprise Features: Both offer enterprise plans with SLAs, volume discounts, encryption, data access controls, and compliance. For example, OpenAI’s enterprise allows Azure-based deployment with data residency controls; Anthropic emphasizes AWS/GCP integrations with logging and privacy (see Databricks Agent Bricks features).

Pricing & Cost-per-Token

| Tier / Model | GPT-5.5 (OpenAI) | Cloud Opus 3.7 (Claude Sonnet) |

|---|---|---|

| Input Tokens ($/1M) | $5.00 | $3.00 (standard) |

| Output Tokens ($/1M) | $30.00 | $15.00 (standard) |

| Key Notes | >272K tokens doubles cost per docs | Standard pricing; extended mode uses same price |

| Special Tiers | GPT-5.5 Pro (2× compute): $30 input, $180 output (same context) | No public “Pro”; uses extended thinking mode (free toggle) |

| Free Tier / Trial | ChatGPT plus subscribers get it (no tokens cost) | Free Claude plan is limited; API access has no free tier |

| Deployment | Cloud (OpenAI servers) | Cloud (Anthropic servers, AWS/GCP) |

GPT-5.5’s native price ($5/$30 per million tokens) is roughly twice that of GPT-4 (now $3/$6 for GPT-4o in 128K) but reflects its frontier performance. In contrast, Claude 3.7 Sonnet pricing ($3/$15) is the same as older Claude models, making it cheaper per token. Because GPT-5.5 uses many fewer tokens to solve problems (“72% fewer tokens than Claude Opus 4.7 for the same task” in one test), effective costs can balance out. Note: Anthropic’s pricing includes its chain-of-thought “thinking” in output tokens; OpenAI currently does not charge extra for step-by-step outputs.

Cost Example: Answering a 10K-token math question (500 words) might cost ~$0.05 in input + $0.30 in output on GPT-5.5. The same prompt on Claude Sonnet (10K tokens) costs ~$0.03 input + $0.15 output roughly half the price. However, GPT-5.5 would likely need far fewer prompts and tokens to solve very complex problems due to efficiency.

Feature Comparison

| Feature | GPT-5.5 (OpenAI) | Cloud Opus 3.7 (Claude 3.7 Sonnet) |

|---|---|---|

| Context Window | 1,050,000 tokens | 200,000 tokens |

| Multimodal Capabilities | Text & image input; text output | Text & image input; text output |

| Fine-tuning/Adapters | No (fine-tuning not supported) | No (no public fine-tuning service) |

| Tooling (Function Calls) | Supports OpenAI functions (JSON, tools) | Supports API calls, external tools via Claude Code (preview) |

| Adaptive Reasoning | Fixed context, no explicit thinking mode | “Thinking” mode (Chain-of-thought) available |

| Safety & Guardrails | Strong safeguards, refusal/redirect policies | Constitutional AI, fewer false refusals |

| Multilingual Support | Yes (many languages) | Yes, multilingual support |

| Benchmark Scores | Highest on general reasoning/code (82.7% TB-2.0) | Strong on reasoning/coding (state-of-art on SWE- and TAU-bench) |

| Deployment | Cloud-only (OpenAI/Azure) | Cloud-only (Anthropic, AWS, Databricks) |

| Enterprise APIs | OpenAI API with business features (privacy, audit) | Anthropic API (via AWS Bedrock, etc) |

Pricing Breakdown Table

Pricing vs. Context Window

- GPT-5.5: $5 per 1M input / $30 per 1M output. Batch (non-chat) calls have an input surcharge above 272K tokens. Context 1,050K tokens.

- Claude 3.7 Sonnet: $3 per 1M input / $15 per 1M output. Context 200K tokens, with up to 64K output. Extended “thinking” outputs count as output tokens at same price.

| Model | Context | $ per 1M Input | $ per 1M Output | Notes |

|---|---|---|---|---|

| GPT-5.5 | 1,050,000 | $5.00 | $30.00 | Pricing doubles input/output above token thresholds. |

| GPT-5.5 Pro | 1,050,000 | $30.00 | $180.00 | High-compute variant with 6× base rate. |

| Claude 3.7 Sonnet | 200,000 | $3.00 | $15.00 | Same price in “extended thinking” mode. |

| Claude 3.5 Haiku | 200,000 | $0.30 (fast tier) | $1.50 | (for context, older smaller model). |

| Claude 4.7 Opus | 1,000,000 | $5.00 | $25.00 | Current latest (2026) model, for reference. |

(Prices in USD, as of early 2026. Exact billing may vary by provider; these are baseline rates.)

Pros & Cons

- GPT-5.5 Pros:

- Massive context: Can handle extremely long inputs in one shot.

- Top-tier performance: Leads many benchmarks vs older models.

- Fast and efficient: OpenAI optimized it to match GPT-5.4 speed while using fewer tokens.

- Ecosystem: Wide tooling, libraries (OpenAI SDK, ChatGPT plugins, Azure).

- Multimodal input: Good text + image understanding.

- GPT-5.5 Cons:

- Cost: Highest token price ($30/M output). Can be expensive for heavy use.

- No fine-tuning: Cannot customize the model with your data.

- Cloud-only: Dependent on OpenAI’s or Microsoft’s servers, which may concern some enterprises.

- Memory overhead: Large context means heavy compute (needs powerful hardware).

- Claude 3.7 Sonnet Pros:

- Reasoning focus: Engineered for multi-step reasoning, planning, and coding. The “thinking” mode aids transparency.

- Pricing: Cheaper per token ($3/$15). Good value for large-scale use.

- Safety: Anthropic’s constitutional approach yields more nuanced and often safer outputs.

- Coding tools: Native agentic coding support (Claude Code, GitHub integration).

- Multiplatform: Available via AWS/GCP/Databricks, fitting into cloud pipelines.

- Claude 3.7 Sonnet Cons:

- Smaller context: Only 200K tokens (still large, but far less than GPT-5.5). May need chunking for mega-documents.

- No fine-tuning: Also does not allow custom model training for users.

- Speed: Slower on single-response (due to complex reasoning steps) than smaller Claude Haiku.

- Less ecosystem: Fewer pre-built plugins and integrations compared to OpenAI ecosystem.

Recommended Use Cases

- GPT-5.5 is ideal when you need cutting-edge performance and ultra-long context handling. Its strengths are:

- Large document analysis: Summarizing entire books, long legal contracts, or huge datasets in one go.

- Complex coding projects: Optimizing or generating code across many files (e.g. its standout on SWE-bench Verified).

- Creative generation: Writing novel-length stories, generating large essays, or creative brainstorming where context is crucial.

- Enterprise knowledge work: Data analysis, scientific research, or multi-step tasks where top-tier reasoning is needed.

- Claude 3.7 Sonnet (Cloud Opus 3.7) shines in scenarios where structured reasoning and cost-efficiency matter:

- Agentic workflows: Building AI assistants that use tools (search, databases, terminal) step-by-step, thanks to its hybrid reasoning feature.

- Coding assistance: Debugging, explaining, or refactoring code. (Anthropic’s customers report Sonnet excels at complex coding tasks with fewer hallucinations.)

- Long-context conversations: Customer support agents or data analysis where moderate context is needed but cost matters.

- Safety-sensitive domains: Healthcare, finance, or children’s content, where Claude’s conservative responses and fewer hallucinations give peace of mind.

FAQ

Q: Which is better for coding tasks?

A: Both are powerful coders. GPT-5.5 often writes more compact code (using fewer tokens) and can handle huge codebases. Claude 3.7 Sonnet frequently follows step-by-step reasoning and produces higher-quality code in some tests. If cost is a concern, Claude (cheaper tokens) is attractive, but GPT-5.5 may finish tasks with fewer calls.

Q: Can I fine-tune either model?

A: No, neither GPT-5.5 nor Claude 3.7 offers public fine-tuning. You must rely on prompt engineering or API settings. (OpenAI does not support fine-tuning on GPT-5.5, and Anthropic’s models are fixed from the API side.)

Q: What about hallucination and safety?

A: Both have advanced safety. GPT-5.5 has stronger training safeguards (OpenAI reports best refusal rates). Claude’s Constitutional AI yields very safe behavior (it trains on avoiding harmful content), but may produce factual errors if prompted incorrectly. In practice, Claude is known for fewer “unnecessary refusals” in its latest release.

Q: Are these models available on-premise or offline?

A: No, they are only offered as cloud services. You pay per token via the API. (OpenAI’s GPT-5.5 can be called via Azure; Anthropic’s Claude can be used on AWS or Databricks.) There is no local version of GPT-5.5 or Claude.

Q: How do costs scale with usage?

A: For GPT-5.5, long prompts over 272K tokens cost double in input; also, GPT-5.5 Pro costs 6× as much as standard. For Claude, pricing is flat. You should estimate based on your typical prompt length and output length. For example, see the above pricing table for per-million-token rates. Many users try smaller models (GPT-4o, Claude Sonnet 4, etc.) for low-volume needs to save cost.

Q: Any alternatives to consider?

A: For open-source alternatives, check our guide on [best open-source coding models], which lists models like LLaMA, Mistral, DeepSeek that can run locally or cheaply in the cloud. For enterprise multi-modal tasks, Google’s Gemini models or Microsoft’s models are other options (see our [Claude vs Gemini comparison]).

Q: What is “Cloud Opus 3.7”?

A: “Cloud Opus 3.7” likely refers to Anthropic’s Claude 3.7 Sonnet. (Anthropic’s naming is confusing: there is no Claude 3.7 “Opus”; Sonnet is the active model. We use “Cloud Opus 3.7” to mean the cloud service of Claude 3.7 Sonnet.)

Conclusion & Call to Action

In summary, GPT-5.5 and Claude 3.7 Sonnet (Cloud Opus 3.7) are both flagship AI models with distinct strengths. GPT-5.5 is the smarter, faster thinker with an enormous memory and outstanding benchmark scores, suited for demanding tasks if budget allows. Claude 3.7 Sonnet is the more cost-effective reasoner, especially in tool-driven or code-heavy workflows. Your choice depends on your priorities (performance vs. cost, context vs. budget, creative tasks vs. structured reasoning). We recommend prototyping with both (they have free trial tiers) to see which aligns with your use case.

For more in-depth guides, check out our linked resources above (like the [best open-source coding models] for cost-sensitive devs and [Claude vs Gemini comparison] if you’re weighing Anthropic against Google’s models). Stay updated by reviewing the latest model docs and pricing pages, and happy building with these cutting-edge AI tools!

Additional Internal Linking Suggestions:

- “Vectorless RAG with Page Index: The Future of Document Intelligence in 2026” – A deep dive on AI document retrieval (IndianPrompt) – Link

- “Best Open Source Models for Coding in 2026” – Comparison of free coding LLMs (IndianPrompt) – Link

- “Claude vs Gemini for Document Analysis” – Detailed head-to-head test (IndianPrompt) – Link

External Authoritative Sources:

- OpenAI GPT-5.5 API Documentation (developers.openai.com)

- Anthropic Claude 3.7 Sonnet Announcement (anthropic.com)

- NVIDIA Blog on GPT-5.5 (practical coding performance) (nvidia.com)

- TechCrunch on GPT-5.5 release (techcrunch.com)

- IBM What is Claude AI? (background on Claude & AI safety) (ibm.com)